Building Quorum: Architecture of a Multi-Tenant Approval Engine in Go

Every enterprise project I've worked on has needed some version of maker-checker. A fund transfer that needs two sign-offs. A user provisioning request that routes through compliance. An expense claim that climbs the management chain before hitting finance. The pattern is everywhere, and every team builds it from scratch.

I've watched this play out across organizations and stacks. A fintech startup wires up a basic approve/reject flow in their monolith. A bank builds an elaborate approval system with five abstraction layers. A SaaS company bolts request approvals onto their admin panel as an afterthought. Each one solves roughly the same problem, each one takes weeks or months, and each one ends up with the same gaps: no webhook delivery guarantees, no concurrent approval handling, no multi-stage support.

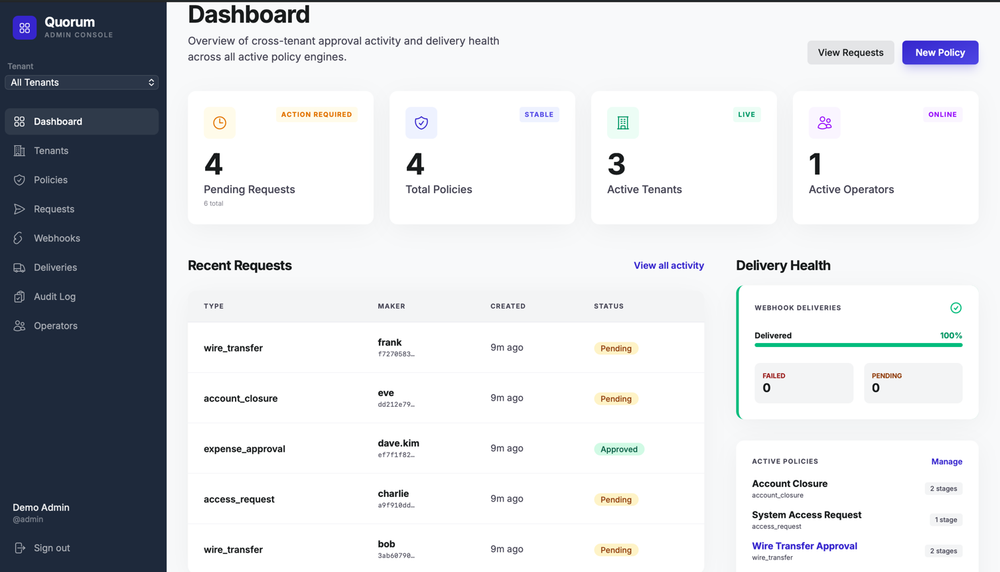

Quorum exists because approval workflows shouldn't require a custom-built system every time. It's a standalone, multi-tenant approval engine that any application can integrate with via REST API. This post covers the architecture, the design decisions, and the problems I had to solve along the way.

System Overview

Quorum is a Go HTTP server backed by PostgreSQL (or MSSQL). Consumer applications talk to it via REST: create approval requests, submit decisions, register webhooks. Operators manage the instance via an admin console built with Svelte 5. Embeddable web components let consumer apps drop approval UIs directly into their frontends, with real-time updates via Server-Sent Events.

The core data model has four main entities: Policies define the approval structure for a request type. Requests are the things being approved. Approvals are individual checker votes. Webhooks notify consumer applications when request status changes.

The startup sequence in main.go follows strict dependency injection: config loading, database pool creation, store layer instantiation (eight store implementations), service composition with cross-wiring, background worker initialization, and finally the HTTP server with its middleware stack. Every dependency is explicit; no global state, no init functions.

The Vote Evaluation Engine

The approval flow is where the interesting problems live. A request passes through sequential stages, each requiring a configurable number of approvals before advancing. The tricky part isn't recording votes; it's determining outcomes correctly under concurrency.

The State Machine

A request has five states: pending, approved, rejected, cancelled, expired. Only pending is non-terminal. The transition rules:

- pending -> approved: Final stage's approval threshold met

- pending -> rejected: Rejection policy triggered at any stage

- pending -> cancelled: Maker cancels their own request

- pending -> expired: Background worker detects expires_at <= NOW()

No other transitions are valid. A terminal state is permanent.
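
The transition rules above can be sketched as a small guard function. This is a minimal illustration; the Status type, its constants, and canTransition are assumed names, not Quorum's actual identifiers.

```go
package main

import "fmt"

// Status and its constants are illustrative names for this sketch.
type Status string

const (
	Pending   Status = "pending"
	Approved  Status = "approved"
	Rejected  Status = "rejected"
	Cancelled Status = "cancelled"
	Expired   Status = "expired"
)

// canTransition reports whether a request may move from one status to another.
// Only pending has outgoing transitions; every terminal state is permanent.
func canTransition(from, to Status) bool {
	if from != Pending {
		return false
	}
	switch to {
	case Approved, Rejected, Cancelled, Expired:
		return true
	default:
		return false
	}
}

func main() {
	fmt.Println(canTransition(Pending, Approved))  // true
	fmt.Println(canTransition(Approved, Rejected)) // false
}
```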

Stage Configuration and Rejection Policies

Each stage defines required_approvals, allowed_checker_roles, allowed_permissions, an authorization_mode, and a rejection_policy. The rejection policy is per-stage, which matters in practice: you might want strict single-rejection rules for compliance stages but allow minority dissent for committee stages.

The any policy rejects the entire request on a single rejection at that stage. The threshold policy only rejects when there aren't enough remaining checkers to meet the approval threshold. With required_approvals: 3 and max_checkers: 5, two rejections still leave three possible approvals. Three rejections make the threshold unreachable, triggering rejection.

The Evaluation Function

Vote evaluation is implemented as a pure function with no database access and no side effects. It takes vote counts, the current stage index, and the policy, then returns a pair of pointers, (*RequestStatus, *int):

- (*StatusRejected, nil): Terminal rejection

- (*StatusApproved, nil): Terminal approval (final stage passed)

- (nil, &nextStage): Advance to next stage

- (nil, nil): No action yet, waiting for more votes

The algorithm checks rejection first:

1. Load current stage definition from policy

2. If rejection_policy is "any" and rejectionCount > 0 -> REJECTED

3. If rejection_policy is "threshold":

remaining = maxCheckers - approvalCount - rejectionCount

if approvalCount + remaining < requiredApprovals -> REJECTED

4. If approvalCount >= requiredApprovals:

if this is the last stage -> APPROVED

else -> advance to (currentStage + 1)

5. Otherwise -> no action (nil, nil)

Making this a pure function was deliberate. It's trivially testable: feed in counts and assert outcomes. No mocking required. The database interaction happens in the layer above, and the evaluation function doesn't need to know or care about transactions, locks, or retries.
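
To make the steps above concrete, here is a minimal Go sketch of such a function. It follows the five steps as listed; the Stage struct, the string-valued rejection policy, and the name evaluate are assumptions for illustration rather than Quorum's real signatures.

```go
package main

import "fmt"

// RequestStatus and the constants below are assumed names for this sketch.
type RequestStatus string

const (
	StatusApproved RequestStatus = "approved"
	StatusRejected RequestStatus = "rejected"
)

// Stage holds only the fields the evaluation needs (hypothetical shape).
type Stage struct {
	RequiredApprovals int
	MaxCheckers       int
	RejectionPolicy   string // "any" or "threshold"
}

// evaluate returns (status, nil) for a terminal outcome, (nil, &next) for a
// stage advance, and (nil, nil) when more votes are needed.
func evaluate(approvals, rejections, current int, stages []Stage) (*RequestStatus, *int) {
	stage := stages[current]
	rejected, approved := StatusRejected, StatusApproved

	// Rejection is checked first.
	switch stage.RejectionPolicy {
	case "any":
		if rejections > 0 {
			return &rejected, nil
		}
	case "threshold":
		remaining := stage.MaxCheckers - approvals - rejections
		if approvals+remaining < stage.RequiredApprovals {
			return &rejected, nil
		}
	}

	if approvals >= stage.RequiredApprovals {
		if current == len(stages)-1 {
			return &approved, nil
		}
		next := current + 1
		return nil, &next
	}
	return nil, nil
}

func main() {
	stages := []Stage{{RequiredApprovals: 3, MaxCheckers: 5, RejectionPolicy: "threshold"}}
	// Three rejections out of five checkers leave only two possible approvals.
	status, _ := evaluate(0, 3, 0, stages)
	fmt.Println(*status) // rejected
}
```

Because the function only sees counts, a table-driven test can cover every branch without a database or mocks.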

Serializing Concurrent Approvals

The hardest problem in an approval engine isn't the UI or the API design. It's handling two checkers who approve at the exact same moment.

The Race Condition

Without serialization, two concurrent approvals can both read the same vote count, both conclude the threshold is met, and both attempt a stage advance. Or worse, both conclude the threshold isn't met (because neither sees the other's vote) and neither advances the stage. The request gets stuck.

The Transaction Design

The decision processing function wraps the entire critical section in a database transaction:

BEGIN TRANSACTION

1. SELECT ... FROM requests WHERE id = $1 FOR UPDATE

-> Acquires exclusive row-level lock

-> Second concurrent transaction blocks here until first commits

2. Re-read current_stage from locked row

-> Stage may have advanced since the request was first loaded

3. INSERT INTO approvals (request_id, checker_id, decision, stage_index, ...)

-> Record the vote inside the lock

4. SELECT COUNT(*) FROM approvals WHERE request_id = $1 AND stage_index = $2

-> Count votes while holding the lock (guaranteed accurate)

5. evaluateWithCounts(approvalCount, rejectionCount, currentStage, policy)

-> Pure function determines outcome

6. If terminal status:

UPDATE requests SET status = $1 WHERE id = $2 AND status = 'pending'

INSERT INTO webhook_outbox (...) <- Enqueue webhooks atomically

If stage advance:

UPDATE requests SET current_stage = $1 WHERE id = $2 AND status = 'pending'

COMMIT

The FOR UPDATE on the request row is the serialization point. When two checkers approve simultaneously, the first acquires the lock and proceeds. The second blocks on the SELECT ... FOR UPDATE until the first transaction commits. By the time the second checker's transaction continues, it sees the updated vote count and makes the correct decision.

The Compare-and-Swap Safety Net

Even with row locking, the UpdateStatus and UpdateStageAndStatus methods include a WHERE status = 'pending' clause. If RowsAffected() == 0, the request was already resolved by another writer. This CAS pattern provides a lock-free safety net against double terminal transitions and works correctly even under connection retries. If a client retries a commit that actually succeeded, the CAS guard prevents a duplicate state change.
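
A minimal sketch of the guard, assuming a database/sql-style ExecContext. The execer interface, the updateStatus name, and the in-memory fakeDB are illustrative stand-ins so the example runs without a database; only ErrStatusConflict and the WHERE clause come from the description above.

```go
package main

import (
	"context"
	"database/sql"
	"errors"
	"fmt"
)

var ErrStatusConflict = errors.New("request is no longer pending")

// execer is satisfied by *sql.DB and *sql.Tx (assumed abstraction for the sketch).
type execer interface {
	ExecContext(ctx context.Context, query string, args ...any) (sql.Result, error)
}

// updateStatus applies the terminal status only if the row is still pending.
func updateStatus(ctx context.Context, db execer, id, status string) error {
	res, err := db.ExecContext(ctx,
		`UPDATE requests SET status = $1 WHERE id = $2 AND status = 'pending'`,
		status, id)
	if err != nil {
		return err
	}
	n, err := res.RowsAffected()
	if err != nil {
		return err
	}
	if n == 0 {
		return ErrStatusConflict // another writer already resolved the request
	}
	return nil
}

// fakeDB mimics a single requests row so the sketch runs standalone.
type fakeDB struct{ status string }

type fakeResult int64

func (r fakeResult) LastInsertId() (int64, error) { return 0, nil }
func (r fakeResult) RowsAffected() (int64, error) { return int64(r), nil }

func (f *fakeDB) ExecContext(_ context.Context, _ string, args ...any) (sql.Result, error) {
	if f.status != "pending" {
		return fakeResult(0), nil
	}
	f.status = args[0].(string)
	return fakeResult(1), nil
}

func runDemo() (first, second error) {
	db := &fakeDB{status: "pending"}
	ctx := context.Background()
	first = updateStatus(ctx, db, "req-1", "approved")
	second = updateStatus(ctx, db, "req-1", "rejected")
	return first, second
}

func main() {
	first, second := runDemo()
	fmt.Println(first, second)
}
```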

Pre-Lock Validation

Before entering the transaction, the service performs validation that doesn't need serialization: checking the request exists, verifying it's still pending, confirming the checker isn't the maker, validating roles and permissions, checking the eligible reviewers whitelist, and calling the external authorization hook if configured.

These checks run against a non-locked read. If they pass, the system enters the transaction. If the request was resolved between the pre-check and the lock acquisition, the CAS guard catches it. This keeps the lock held for the minimum necessary duration.

Testing Concurrency

I test the concurrent approval path at two levels. A service-level unit test launches five goroutines, each calling Approve() with a different checker ID. A sync.Mutex inside the mock RunInTx simulates database serialization: the first caller records the vote and triggers terminal status; subsequent calls see ErrStatusConflict. After all goroutines finish, exactly one succeeded.

An integration test does the same thing against a real PostgreSQL instance (via testcontainers), using actual FOR UPDATE locks. Five goroutines each run a full transaction: GetByIDForUpdate, create approval, UpdateStatus. The database's own row-level locking ensures only one wins. This validates the CAS guard and lock behavior end-to-end.

One subtle bug I hit: the mock GetByIDFunc was returning the same pointer to all goroutines. When one goroutine mutated req.Status after the transaction, others read the mutated value. The race detector caught it. The fix: r := *req; return &r, nil.

Multi-Layer Authorization

Deciding who can approve is nearly as complex as deciding what happens when they do. Quorum evaluates multiple layers before allowing a checker to act.

Roles, Permissions, and Authorization Modes

Each approval stage can define allowed_checker_roles and allowed_permissions. A checker's identity carries both, extracted from headers by the auth middleware (X-User-Roles, X-User-Permissions, comma-separated).

When a stage has only roles or only permissions, the validation is straightforward: check the relevant list. When both are present, the authorization_mode field determines how they combine:

func validateAuthorization(stage *model.ApprovalStage, checkerRoles, checkerPermissions []string) error {

hasRoles := stage.AllowedCheckerRoles != nil

hasPermissions := stage.AllowedPermissions != nil

if !hasRoles && !hasPermissions {

return nil

}

if hasRoles && !hasPermissions {

return validateCheckerRoles(stage, checkerRoles)

}

if !hasRoles && hasPermissions {

return validateCheckerPermissions(stage, checkerPermissions)

}

// Both are set — use authorization_mode

switch stage.AuthorizationMode {

case model.AuthModeAny:

roleErr := validateCheckerRoles(stage, checkerRoles)

permErr := validateCheckerPermissions(stage, checkerPermissions)

if roleErr == nil || permErr == nil {

return nil

}

return roleErr

case model.AuthModeAll:

if err := validateCheckerRoles(stage, checkerRoles); err != nil {

return err

}

return validateCheckerPermissions(stage, checkerPermissions)

default:

return validateCheckerRoles(stage, checkerRoles)

}

}

any means satisfy either roles OR permissions. all means both. If both fields are present but authorization_mode is omitted, the policy is rejected at creation time. Silent misconfiguration caused too many debugging sessions.

The Eligible Reviewers Whitelist

A request can optionally carry an eligible_reviewers list: specific user IDs allowed to act on this particular request. This is a per-request override, set by the maker at creation time. When present, a checker must appear in this list in addition to satisfying role/permission requirements:

if len(req.EligibleReviewers) > 0 && !slices.Contains(req.EligibleReviewers, checkerID) {

return nil, ErrNotEligibleReviewer

}

This handles cases where the approval pool is dynamic. An expense claim might only be reviewable by the submitter's direct manager and the finance team lead, not every user tagged manager in the system.

Dynamic Authorization Hooks

For authorization logic that lives outside Quorum, in the consumer application's own permission system, stages can define a dynamic_authorization_url. Before processing a decision, Quorum POSTs the request details, checker identity, and maker identity to this URL. The external system responds with {"allowed": true/false, "reason": "..."}.

Both request and response are HMAC-SHA256 signed when a per-policy secret is configured. The response signature is verified with hmac.Equal for constant-time comparison. The response body is read into a buffer first with io.ReadAll so it can be used for both signature verification and JSON decoding.
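
The signing and verification steps might look like the sketch below. The hex encoding of the signature and the function names sign and verify are assumptions; the post only specifies HMAC-SHA256 and hmac.Equal.

```go
package main

import (
	"crypto/hmac"
	"crypto/sha256"
	"encoding/hex"
	"fmt"
)

// sign returns the hex-encoded HMAC-SHA256 of body (hex is an assumed encoding).
func sign(secret, body []byte) string {
	mac := hmac.New(sha256.New, secret)
	mac.Write(body)
	return hex.EncodeToString(mac.Sum(nil))
}

// verify recomputes the MAC over the buffered body and compares in constant time.
func verify(secret, body []byte, signatureHex string) bool {
	got, err := hex.DecodeString(signatureHex)
	if err != nil {
		return false
	}
	mac := hmac.New(sha256.New, secret)
	mac.Write(body)
	return hmac.Equal(got, mac.Sum(nil))
}

func main() {
	secret := []byte("per-policy-secret")
	body := []byte(`{"allowed": true, "reason": "ok"}`)
	sig := sign(secret, body)
	fmt.Println(verify(secret, body, sig))                       // true
	fmt.Println(verify(secret, []byte(`{"allowed":false}`), sig)) // false
}
```

Verifying against the exact bytes received, before decoding, is why the body has to be buffered once and reused.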

The dynamic hook is intentionally skipped on the read path. When a widget asks "can this viewer act?", the viewer_can_act computed field evaluates all local checks (roles, permissions, eligible reviewers, duplicate vote detection) but doesn't make an HTTP call. This keeps GET requests fast and free of side effects.

The Full Authorization Chain

When a checker submits a decision, validation runs in order:

- Request exists and is pending

- Checker is not the maker (self-approval blocked)

- Checker hasn't already voted on this stage

- Roles satisfy the stage's allowed_checker_roles

- Permissions satisfy the stage's allowed_permissions

- Authorization mode combines the role/permission results

- Checker is in eligible_reviewers (if set)

- Dynamic authorization hook returns allowed: true (if configured)

Only after all checks pass does the system enter the transaction and record the vote.

Durable Webhooks: The Outbox Pattern

The first version of webhook delivery was simple: approve a request, fire an HTTP POST to the webhook URL, done. This fails in two ways that aren't obvious until production.

Crash between commit and delivery. The database transaction commits (request is approved), the server crashes before the HTTP call, the consumer never gets notified. State and notifications permanently diverge.

Delivery failure after commit. The HTTP call fails (consumer is down, network blip). The request is approved in the database, but should it be rolled back? Of course not: the approval is valid regardless of webhook delivery. Now you need retry logic, and you need to track what was delivered and what wasn't.

The outbox pattern solves both by making webhook entries part of the same transaction as the state change.

Transactional Enqueue

When a request transitions to a terminal state, the service finds all matching webhooks (filtered by event type, request type, and tenant), constructs the payload, and inserts outbox entries, all within the same database transaction as the status update. If the transaction rolls back, the webhook entries vanish too. If it commits, the entries are guaranteed to exist.

State change + webhook entries = one atomic unit

The service layer accomplishes this by passing store interfaces into a transaction callback:

requestService.SetWebhookDispatch(stores.RunInTx, dispatcher.Enqueue, dispatcher.Signal)

RunInTx wraps a function in a database transaction, passing transactional store instances to the callback. Enqueue writes outbox entries using the transactional outbox store. Signal wakes the dispatcher after commit.

The Dispatcher: Signal + Heartbeat Hybrid

A background goroutine picks up pending outbox entries and delivers them. It uses two trigger mechanisms.

Signal-driven wake. After the transaction commits, the service calls dispatcher.Signal(). The dispatcher's run loop receives on a channel and immediately processes a batch. This delivers webhooks within milliseconds of the state change.

func (d *Dispatcher) Signal() {

select {

case d.signal <- struct{}{}:

default: // channel already has a signal, skip

}

}

The non-blocking send prevents callers from blocking if the dispatcher hasn't consumed the previous signal. The default case matters: a missed signal is harmless because the heartbeat will catch it.

Heartbeat sweep. A ticker fires every 30 seconds (configurable) and triggers batch processing. This catches entries whose signal never arrived, for example when the process restarts after the transaction commits but before Signal() is called.

select {

case <-ctx.Done():

return

case <-d.signal:

d.processBatch(ctx)

case <-ticker.C:

d.processBatch(ctx)

}

Distributed Batch Claiming

In multi-instance deployments, multiple dispatchers are running. Each one must claim entries without duplicating delivery. The claim query uses FOR UPDATE SKIP LOCKED:

UPDATE webhook_outbox

SET status = 'processing', next_retry_at = NOW() + INTERVAL '5 minutes'

WHERE id IN (

SELECT id FROM webhook_outbox

WHERE (status = 'pending' OR status = 'processing')

AND next_retry_at <= NOW()

ORDER BY created_at ASC

LIMIT 50

FOR UPDATE SKIP LOCKED

)

RETURNING ...

SKIP LOCKED is the key: if another worker already locked a row, skip it instead of waiting. Each worker gets a non-overlapping batch. The 5-minute lease (next_retry_at = NOW() + 5 minutes) handles worker crashes: if a worker claims entries and dies, those entries become reclaimable after 5 minutes.

Delivery, Retry, and SSRF Protection

Each entry is delivered via HTTP POST with an HMAC-SHA256 signature in the X-Signature-256 header. The consumer can verify payload authenticity without shared sessions.

On success (2xx response), the entry is marked delivered with a timestamp. On failure, retry kicks in with exponential backoff and jitter:

delay = retryDelay * 2^(attempts - 1)

delay = min(delay, maxRetryDelay)

delay = delay +/- random(20%)

nextRetry = now + delay

With a 5-second base delay and a 1-hour cap (defaults), the sequence is approximately: 5s, 10s, 20s, 40s, 80s, ... up to 1 hour between attempts. The +/-20% jitter prevents thundering herd when multiple entries fail simultaneously.
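
The formula can be sketched in Go as follows. The function name backoff, the injected random source, and the constant names are assumptions made so the example is deterministic under test; the 5-second base, 1-hour cap, and 20% jitter come from the text.

```go
package main

import (
	"fmt"
	"math/rand"
	"time"
)

const (
	defaultBase = 5 * time.Second
	defaultMax  = time.Hour
)

// backoff computes base * 2^(attempts-1), caps it at max, then applies
// +/-20% jitter. rnd must return a value in [0, 1), like rand.Float64.
func backoff(attempts int, base, max time.Duration, rnd func() float64) time.Duration {
	if attempts < 1 {
		attempts = 1
	}
	delay := base
	// Doubling in a loop (instead of shifting) avoids overflow for large attempt counts.
	for i := 1; i < attempts && delay < max; i++ {
		delay *= 2
	}
	if delay > max {
		delay = max
	}
	factor := 1 + (rnd()*0.4 - 0.2) // maps [0, 1) onto [-20%, +20%)
	return time.Duration(float64(delay) * factor)
}

func main() {
	for attempt := 1; attempt <= 5; attempt++ {
		fmt.Println(attempt, backoff(attempt, defaultBase, defaultMax, rand.Float64))
	}
}
```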

Permanent failure triggers when either condition is met: the attempt count exceeds maxRetries, or the retryWindow (default 72 hours) has elapsed since the entry was first created. The retry window adds a wall-clock deadline: even if individual retries haven't been exhausted, an entry that's been failing for three days is marked permanently failed. An audit log records the failure.

Webhook URLs present an SSRF risk. An attacker could register http://169.254.169.254/latest/meta-data/ as a webhook URL and harvest cloud metadata. A custom net.Dialer resolves hostnames and checks every resolved IP against a blocklist before connecting: IPv4 loopback (127.0.0.0/8), RFC 1918 ranges (10.0.0.0/8, 172.16.0.0/12, 192.168.0.0/16), link-local (169.254.0.0/16), and their IPv6 equivalents. Checking at connection time rather than registration time is critical because DNS records can change after a webhook is created.
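
One way to check at connection time is the Control hook on net.Dialer, which runs after DNS resolution with the literal IP about to be dialed. The sketch below is an assumption about how such a check could be written, not necessarily how Quorum does it; isBlockedIP, isBlockedHost, and safeDialer are illustrative names.

```go
package main

import (
	"fmt"
	"net"
	"syscall"
	"time"
)

// isBlockedIP covers loopback, RFC 1918 / IPv6 unique-local, link-local,
// and unspecified addresses using the standard library classifiers.
func isBlockedIP(ip net.IP) bool {
	return ip.IsLoopback() || ip.IsPrivate() || ip.IsLinkLocalUnicast() ||
		ip.IsLinkLocalMulticast() || ip.IsUnspecified()
}

// isBlockedHost treats anything that is not a parseable IP literal as blocked.
func isBlockedHost(literal string) bool {
	ip := net.ParseIP(literal)
	return ip == nil || isBlockedIP(ip)
}

// safeDialer rejects blocked targets for every connection attempt, so a DNS
// record that changes after registration is still caught.
func safeDialer() *net.Dialer {
	return &net.Dialer{
		Timeout: 10 * time.Second,
		Control: func(network, address string, _ syscall.RawConn) error {
			host, _, err := net.SplitHostPort(address)
			if err != nil {
				return err
			}
			if isBlockedHost(host) {
				return fmt.Errorf("webhook target %s is not allowed", address)
			}
			return nil
		},
	}
}

func main() {
	_ = safeDialer() // would be plugged into an http.Transport's DialContext
	fmt.Println(isBlockedHost("169.254.169.254")) // true
	fmt.Println(isBlockedHost("93.184.216.34"))   // false
}
```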

Outbox Management API

Failed deliveries need operator visibility. The admin console exposes endpoints for listing outbox entries (filtered by status, request ID, tenant), viewing aggregate stats (counts by status), retrying individual failed entries, and bulk-retrying all failed entries for a given request. Retry resets the entry to pending and signals the dispatcher for immediate reprocessing.

Retention Cleanup

Delivered entries accumulate in the outbox table. A periodic cleanup process (running at 10x the heartbeat interval, capped at 1 hour) deletes entries older than the configured retention period. Setting retention to zero disables cleanup entirely.

Multi-Tenancy: Column-Level Isolation

I evaluated three approaches.

Database-per-tenant. Maximum isolation, but requires dynamic connection management and makes cross-tenant operations (like the admin console) complex. Overkill for Quorum's use case.

Schema-per-tenant. Better, but still requires dynamic schema management, migration coordination across schemas, and complicates connection pooling.

Column-level isolation. A tenant_id column on each table, with every query scoped by tenant. Simple migrations, uniform query patterns, single connection pool.

I went with column-level. The tradeoff is that every query must include tenant scoping, which I enforce at the store layer by reading from context:

tenant := auth.TenantIDFromContext(ctx)

if tenant != "" {

query += " AND tenant_id = $2"

args = append(args, tenant)

}

When the tenant is empty (console routes, background workers), the query runs unscoped. This dual behavior is intentional: the API always has a tenant in context, the console optionally filters by tenant, and background workers process all tenants.

Explicit Registration, No Defaults

I considered falling back to a default tenant when X-Tenant-ID is missing. This creates a silent data-mixing risk: a misconfigured consumer app without the header would dump data into an implicit bucket. Instead, the auth layer returns an error if the header is absent, and the validation middleware returns 403 for unregistered slugs.

Tenants are explicitly created by operators via the console. No tenants are seeded by default. Operators must register at least one before consumers can make API calls.

The Caching Problem

Tenant validation runs on every API request. The naive implementation calls tenants.GetBySlug(ctx, tenantID), a database query per request. Under load, this becomes measurable overhead.

The solution is an in-memory set of registered tenant slugs:

type TenantService struct {

tenants store.TenantStore

mu sync.RWMutex

slugs map[string]struct{}

}

func (s *TenantService) IsRegistered(slug string) bool {

s.mu.RLock()

defer s.mu.RUnlock()

_, ok := s.slugs[slug]

return ok

}

Using map[string]struct{} instead of map[string]bool is deliberate. An empty struct value occupies zero bytes, so each entry costs only its key, and the type signals intent: this is a set, not a lookup table. I don't need to store tenant data, just whether a slug exists.

The cache is populated at startup via LoadCache() and maintained inline: Create() adds the slug, Delete() removes it. For multi-instance deployments, a background goroutine periodically calls LoadCache() to reconcile with the database (default: every minute). Inline updates handle same-instance consistency; the periodic refresh handles cross-instance consistency.
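
A sketch of the reconcile step, under the assumption that the store can list all slugs. The slugLister interface, TenantCache type, and refreshLoop name are hypothetical; the idea shown is building the new set off to the side and swapping it in under the write lock, so readers never observe a half-filled map.

```go
package main

import (
	"context"
	"fmt"
	"sync"
	"time"
)

// slugLister is an assumed slice of the tenant store's API.
type slugLister interface {
	ListSlugs(ctx context.Context) ([]string, error)
}

type TenantCache struct {
	mu    sync.RWMutex
	slugs map[string]struct{}
	src   slugLister
}

// LoadCache rebuilds the set from the database and swaps it in atomically.
func (c *TenantCache) LoadCache(ctx context.Context) error {
	list, err := c.src.ListSlugs(ctx)
	if err != nil {
		return err // keep serving the previous set
	}
	next := make(map[string]struct{}, len(list))
	for _, s := range list {
		next[s] = struct{}{}
	}
	c.mu.Lock()
	c.slugs = next
	c.mu.Unlock()
	return nil
}

func (c *TenantCache) IsRegistered(slug string) bool {
	c.mu.RLock()
	defer c.mu.RUnlock()
	_, ok := c.slugs[slug]
	return ok
}

// refreshLoop reconciles with the database until ctx is cancelled.
func (c *TenantCache) refreshLoop(ctx context.Context, every time.Duration) {
	t := time.NewTicker(every)
	defer t.Stop()
	for {
		select {
		case <-ctx.Done():
			return
		case <-t.C:
			_ = c.LoadCache(ctx) // a real service would log the error
		}
	}
}

// staticSource stands in for the database in this sketch.
type staticSource []string

func (s staticSource) ListSlugs(context.Context) ([]string, error) { return s, nil }

func demo() (known, unknown bool) {
	c := &TenantCache{src: staticSource{"acme-corp"}}
	if err := c.LoadCache(context.Background()); err != nil {
		return false, false
	}
	return c.IsRegistered("acme-corp"), c.IsRegistered("unknown")
}

func main() {
	known, unknown := demo()
	fmt.Println(known, unknown) // true false
}
```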

The Tenant Context Pipeline

The request flows through a layered context enrichment:

Request arrives with X-Tenant-ID: acme-corp

|

authMiddleware -> TrustProvider extracts header -> sets Identity.TenantID in context

|

tenantValidationMiddleware -> reads tenant from context -> checks IsRegistered()

-> 403 if not found

|

Handler -> calls service layer

|

Service -> calls store layer

|

Store -> reads auth.TenantIDFromContext(ctx) -> adds WHERE tenant_id = $N

Every table except approvals (inherits tenant scope via foreign key to requests), webhook_outbox (processed globally), and operators (global admins) has a tenant_id column.

The uniqueness constraints are tenant-scoped too. UNIQUE(tenant_id, request_type) on policies means two tenants can both have a "wire_transfer" policy. The fingerprint index is (tenant_id, type, fingerprint) WHERE status = 'pending', preventing cross-tenant deduplication interference.

Fingerprint Deduplication and Idempotency

Quorum has two complementary mechanisms for preventing duplicate requests. They solve different problems and can both be active on the same request.

Idempotency Keys

Clients can include an Idempotency-Key header when creating a request. The service checks this key before doing anything else: if a request with that key already exists, it returns the existing request immediately. This protects against network retries. If a client's POST times out and it retries, the second call returns the already-created request instead of creating a duplicate.

Idempotency is checked first because it's the cheapest operation and the most common retry scenario.

Content Fingerprinting

When a policy defines identity_fields, Quorum computes a content-based fingerprint to prevent duplicate pending requests for the same logical entity. This is business-level deduplication: "there's already a pending transfer for this account."

The computation is deterministic: sort field names alphabetically, extract values from the payload, serialize as canonical JSON, SHA-256 hash, hex-encode. The same payload always produces the same fingerprint regardless of JSON key ordering.

sort.Strings(identityFields)

values := make(map[string]any)

for _, field := range identityFields {

values[field] = data[field]

}

canonical, err := json.Marshal(values)

if err != nil {

return "", err

}

hash := sha256.Sum256(canonical)

return fmt.Sprintf("%x", hash), nil

The duplicate check queries a partial index: WHERE tenant_id = $1 AND type = $2 AND fingerprint = $3 AND status = 'pending'. Only pending requests block. Once a request reaches a terminal state, new requests with the same fingerprint can be created. A rejected fund transfer can be re-submitted.

Real-Time Updates via SSE

Approval workflows are inherently asynchronous; a checker might not act for hours. But when they do act, the maker's UI should update immediately. Polling wastes resources. WebSockets are overkill for a one-directional status stream. Server-Sent Events strike the right balance.

The Pub/Sub Hub

An in-process hub manages subscriptions keyed by request UUID. When a request's state changes, the service publishes a signal. Each subscriber has a buffered channel (capacity 1) for event coalescing: if multiple state changes happen before a subscriber reads, it receives a single notification and re-fetches the full state via REST.

type Hub struct {

mu sync.RWMutex

subs map[uuid.UUID]map[*Subscriber]struct{}

}

func (h *Hub) Publish(requestID uuid.UUID) {

h.mu.RLock()

defer h.mu.RUnlock()

for sub := range h.subs[requestID] {

select {

case sub.ch <- struct{}{}:

default: // coalesce: already has a pending signal

}

}

}

The non-blocking send is the same pattern used in the webhook dispatcher's signal channel. It's a recurring idiom in Quorum: never block a writer, let the reader catch up on its own schedule.
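
For completeness, here is a runnable sketch of the subscription side that Publish relies on. It keys subscriptions by string instead of uuid.UUID to stay dependency-free, and NewHub plus the bodies of Subscribe and Unsubscribe are assumptions about how the hub could be written.

```go
package main

import (
	"fmt"
	"sync"
)

type Subscriber struct{ ch chan struct{} }

// C exposes the receive side of the coalescing channel.
func (s *Subscriber) C() <-chan struct{} { return s.ch }

type Hub struct {
	mu   sync.RWMutex
	subs map[string]map[*Subscriber]struct{} // string key is a simplification
}

func NewHub() *Hub {
	return &Hub{subs: make(map[string]map[*Subscriber]struct{})}
}

// Subscribe registers a subscriber with a capacity-1 channel for coalescing.
func (h *Hub) Subscribe(id string) *Subscriber {
	sub := &Subscriber{ch: make(chan struct{}, 1)}
	h.mu.Lock()
	defer h.mu.Unlock()
	if h.subs[id] == nil {
		h.subs[id] = make(map[*Subscriber]struct{})
	}
	h.subs[id][sub] = struct{}{}
	return sub
}

// Unsubscribe removes the subscriber and drops the request entry when empty.
func (h *Hub) Unsubscribe(id string, sub *Subscriber) {
	h.mu.Lock()
	defer h.mu.Unlock()
	delete(h.subs[id], sub)
	if len(h.subs[id]) == 0 {
		delete(h.subs, id)
	}
}

// Publish mirrors the non-blocking send shown earlier.
func (h *Hub) Publish(id string) {
	h.mu.RLock()
	defer h.mu.RUnlock()
	for sub := range h.subs[id] {
		select {
		case sub.ch <- struct{}{}:
		default: // coalesce: a signal is already pending
		}
	}
}

func main() {
	h := NewHub()
	sub := h.Subscribe("req-1")
	h.Publish("req-1")
	h.Publish("req-1") // coalesced with the first signal
	fmt.Println(len(sub.ch)) // 1
	h.Unsubscribe("req-1", sub)
}
```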

The SSE Handler

The handler at /api/v1/requests/{id}/events subscribes to the hub and streams events. If the request is already terminal when the connection opens, it sends a single status event and closes; there's nothing to wait for.

For pending requests, the handler enters a long-lived event loop with a 30-second keepalive heartbeat (SSE comment lines). The keepalive serves a dual purpose: it prevents proxies from closing idle connections, and it lets the widget detect connection staleness.

rc := http.NewResponseController(w)

sub := h.hub.Subscribe(id)

defer h.hub.Unsubscribe(id, sub)

keepalive := time.NewTicker(sseKeepaliveInterval)

defer keepalive.Stop()

for {

select {

case <-r.Context().Done():

return

case <-sub.C():

if err := rc.SetWriteDeadline(time.Now().Add(sseKeepaliveInterval + 15*time.Second)); err != nil {

return

}

data, _ := json.Marshal(map[string]string{"request_id": id.String()})

fmt.Fprintf(w, "event: updated\ndata: %s\n\n", data)

rc.Flush()

case <-keepalive.C:

if err := rc.SetWriteDeadline(time.Now().Add(sseKeepaliveInterval + 15*time.Second)); err != nil {

return

}

fmt.Fprintf(w, ": keepalive\n\n")

rc.Flush()

}

}

The handler uses http.ResponseController to manage per-write deadlines on the long-lived connection. The server's global WriteTimeout is disabled to avoid killing SSE streams, so the handler manages its own deadlines per-flush.

The widget client sets a 45-second timeout (15-second buffer over the server's 30-second keepalive interval). A setInterval every 10 seconds checks Date.now() - lastDataTime; if stalled, it aborts the connection and triggers reconnection. The timer is cleared on all exit paths.

One limitation to acknowledge: the hub is in-process only. In a multi-instance deployment behind a load balancer, a client connected to instance A won't see events published by instance B. The widget falls back to polling in that case. Redis pub/sub or Postgres LISTEN/NOTIFY as a shared event bus is on the roadmap.

The Auth Provider Interface

Authentication is pluggable via a Provider interface:

type Provider interface {

Authenticate(r *http.Request) (*Identity, error)

}

type Identity struct {

UserID string

Roles []string

Permissions []string

TenantID string

}

The built-in TrustProvider extracts identity from headers set by a trusted upstream system (reverse proxy, API gateway, service mesh). Header names are configurable: X-User-ID, X-User-Roles, X-User-Permissions, X-Tenant-ID by default. This is the standard pattern in microservice architectures where authentication is handled at the edge.

Identity is threaded through the request context using private context keys. Private keys prevent external packages from accessing or overwriting identity values. The store layer reads TenantIDFromContext(ctx) to scope queries. The service layer reads UserIDFromContext(ctx) for audit logging and self-approval checks.
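
The private-key technique looks roughly like this. The unexported ctxKey type is what makes the value unreachable from other packages; WithIdentity and the exact accessor bodies are assumptions, while TenantIDFromContext and UserIDFromContext are the names used above.

```go
package main

import (
	"context"
	"fmt"
)

type Identity struct {
	UserID   string
	TenantID string
}

// ctxKey is unexported, so no other package can construct a colliding key.
type ctxKey int

const identityKey ctxKey = iota

// WithIdentity is a hypothetical helper the auth middleware would call.
func WithIdentity(ctx context.Context, id *Identity) context.Context {
	return context.WithValue(ctx, identityKey, id)
}

func identityFrom(ctx context.Context) *Identity {
	id, _ := ctx.Value(identityKey).(*Identity)
	return id
}

// TenantIDFromContext returns "" when no identity is present.
func TenantIDFromContext(ctx context.Context) string {
	if id := identityFrom(ctx); id != nil {
		return id.TenantID
	}
	return ""
}

// UserIDFromContext returns "" when no identity is present.
func UserIDFromContext(ctx context.Context) string {
	if id := identityFrom(ctx); id != nil {
		return id.UserID
	}
	return ""
}

func demo() (tenant, user, missing string) {
	ctx := WithIdentity(context.Background(), &Identity{UserID: "u-1", TenantID: "acme-corp"})
	return TenantIDFromContext(ctx), UserIDFromContext(ctx), TenantIDFromContext(context.Background())
}

func main() {
	tenant, user, missing := demo()
	fmt.Printf("%q %q %q\n", tenant, user, missing)
}
```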

The admin console uses a separate JWT-based authentication mechanism for operators. Console JWT auth and API trust auth share no code: they're independent middleware chains on separate route groups. API consumers are applications (identified by upstream auth); console users are humans (identified by username/password, issued a JWT as an httpOnly cookie with SameSite=Strict).

The JWT secret is validated at startup: a non-empty secret shorter than 32 bytes is rejected with a panic (HS256 with a short secret is trivially brute-forceable). An empty secret auto-generates a random 32-byte key with a warning. The first-run experience uses a setup endpoint that creates the initial operator. It works exactly once; once an operator exists, the setup endpoint returns 409. This avoids the "default admin password" antipattern.
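
The startup check could be sketched like this. The sketch returns an error instead of panicking so it is easy to test; resolveSecret and errSecretTooShort are hypothetical names, and the caller would decide whether to panic and whether to log the auto-generation warning.

```go
package main

import (
	"crypto/rand"
	"errors"
	"fmt"
)

const minSecretLen = 32

var errSecretTooShort = errors.New("jwt secret must be at least 32 bytes")

// resolveSecret returns the signing key and whether it was auto-generated.
func resolveSecret(configured string) (secret []byte, generated bool, err error) {
	if configured == "" {
		key := make([]byte, minSecretLen)
		if _, err := rand.Read(key); err != nil {
			return nil, false, err
		}
		return key, true, nil
	}
	if len(configured) < minSecretLen {
		return nil, false, errSecretTooShort
	}
	return []byte(configured), false, nil
}

func main() {
	_, generated, err := resolveSecret("")
	fmt.Println(generated, err) // true <nil>
	_, _, err = resolveSecret("too-short")
	fmt.Println(err)
}
```

A generated key only lives for the lifetime of the process, so sessions issued before a restart would stop validating, which is presumably why the real server warns about it.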

Context-Aware Structured Logging

Quorum uses Go's log/slog with a custom context-aware handler. The handler wraps the base JSON handler and injects request_id, user_id, and tenant_id on every log record from context.

The design problem was import cycles. The logging package can't import the auth package (where UserIDFromContext lives) without creating a circular dependency. The solution is an extractor pattern:

type Extractor struct {

Key string

Extract func(ctx context.Context) string

}

func (h *ContextHandler) Handle(ctx context.Context, record slog.Record) error {

attrs := AttrsFromContext(ctx)

if attrs.RequestID != "" {

record.AddAttrs(slog.String("request_id", attrs.RequestID))

}

for _, ext := range h.extractors {

if v := ext.Extract(ctx); v != "" {

record.AddAttrs(slog.String(ext.Key, v))

}

}

return h.inner.Handle(ctx, record)

}

In main.go, extractors are wired up with auth.UserIDFromContext and auth.TenantIDFromContext. The logging package never imports auth. Every log line from inside a request handler carries user and tenant context automatically, with zero boilerplate in the callers.

The Audit Trail

Every significant state change is recorded in the audit log with the actor, action, timestamp, and optional structured details. The actions tracked: created, approved, rejected, cancelled, stage_advanced, expired, webhook_sent, webhook_failed. Human-initiated actions use the checker or maker ID. Automated actions use "system" as the actor.

func (s *RequestService) audit(ctx context.Context, requestID uuid.UUID, action string, actorID string, details json.RawMessage) {

log := &model.AuditLog{

TenantID: auth.TenantIDFromContext(ctx),

RequestID: requestID,

Action: action,

ActorID: actorID,

Details: details,

}

if err := s.audits.Create(ctx, log); err != nil {

slog.ErrorContext(ctx, "failed to create audit log",

"error", err, "request_id", requestID, "action", action)

}

}

Audit writes are best-effort: failures are logged at ERROR level but never block the primary operation. A valid approval shouldn't fail because the audit table had a transient hiccup. The trade-off is that an entry could theoretically be lost during a database blip, but the state change itself has already committed. The audit is supplementary, not authoritative.

Configuration: Reflection-Based Env Overrides

Quorum uses a layered configuration system with three sources, applied in order of increasing precedence: hardcoded defaults, YAML file, environment variables.

The env var names are derived automatically via reflection. A walkStruct() function recursively traverses the Config struct, converting each field path to screaming snake case:

    func applyEnvOverrides(cfg *Config) {
        walkStruct(reflect.ValueOf(cfg).Elem(), []string{"QUORUM"})
    }

    func walkStruct(v reflect.Value, prefix []string) {
        t := v.Type()
        for i := range t.NumField() { // range over an int requires Go 1.22+
            field := t.Field(i)
            fv := v.Field(i)
            if !field.IsExported() {
                continue
            }
            segment := camelToScreamingSnake(field.Name)
            // Copy the prefix so sibling fields never share a backing array.
            path := append(append([]string{}, prefix...), segment)
            switch fv.Kind() {
            case reflect.Struct:
                // Nested config section: recurse with the extended path.
                walkStruct(fv, path)
            case reflect.Map:
                // Maps have no fixed key set to derive env var names from.
                continue
            default:
                // Scalars land here, including time.Duration: its Kind is
                // Int64, not Struct, so it needs no special case above.
                applyEnvValue(fv, strings.Join(path, "_"))
            }
        }
    }

Config.Database.MaxOpenConns becomes QUORUM_DATABASE_MAX_OPEN_CONNS. A field named JWTSecret contributes the segment JWT_SECRET, because camelToScreamingSnake treats a consecutive uppercase run like JWT as a single word. A Docker deployment can set env vars and skip the config file entirely. Invalid values log a warning and fall back to the YAML or default value; they don't crash the server.
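The post doesn't show camelToScreamingSnake itself, so here is a minimal sketch of one way to get the behavior described above. The boundary rule (insert an underscore before an uppercase letter that follows a lowercase letter or digit, or that ends an uppercase run) is my assumption about how such a function could work, not necessarily Quorum's exact implementation.

```go
package main

import (
	"fmt"
	"strings"
	"unicode"
)

// camelToScreamingSnake converts a Go field name to SCREAMING_SNAKE_CASE.
// An underscore goes before an uppercase letter when it follows a lowercase
// letter or digit, or when it closes an uppercase run (the next rune is
// lowercase), so "JWTSecret" splits as JWT + Secret.
func camelToScreamingSnake(name string) string {
	runes := []rune(name)
	var b strings.Builder
	for i, r := range runes {
		if i > 0 && unicode.IsUpper(r) {
			prev := runes[i-1]
			nextLower := i+1 < len(runes) && unicode.IsLower(runes[i+1])
			if unicode.IsLower(prev) || unicode.IsDigit(prev) || (unicode.IsUpper(prev) && nextLower) {
				b.WriteByte('_')
			}
		}
		b.WriteRune(unicode.ToUpper(r))
	}
	return b.String()
}

func main() {
	for _, name := range []string{"MaxOpenConns", "JWTSecret", "Port"} {
		fmt.Println(name, "->", camelToScreamingSnake(name))
	}
}
```

Joining the converted segments with underscores under the QUORUM prefix then yields names like QUORUM_DATABASE_MAX_OPEN_CONNS.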

Health Checks

Quorum exposes a /health endpoint that reports per-component status. Each dependency implements a HealthChecker interface:

    type HealthChecker interface {
        Name() string
        Health(ctx context.Context) error
    }

The Check function aggregates all checkers and returns individual component status plus an overall healthy/unhealthy flag. The database pool is the primary checker: it pings the connection.

The Stores struct owns the health checkers. When NewStores creates the database pool, it registers the pool as a health checker in the same call. This single-owner pattern means stores.Close() cleanly shuts down both the stores and the health-checked dependencies. No dangling references, no cleanup ordering issues.

Display Template Resolution

Approval requests carry arbitrary JSON payloads. A fund transfer payload looks nothing like a user provisioning payload. Without display templates, checkers see raw JSON and have to parse it mentally.

Display templates are declarative JSON schemas attached to policies. They define how to extract and format fields from the payload for human consumption.

Dot-Notation Path Walking

Templates reference payload fields using dot-notation paths. Path "account.holder.name" walks data["account"]["holder"]["name"]. If any step yields nil or a non-map type, the path resolves to nil; no panics, just a missing field.
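The path walk is small enough to sketch in full. This version assumes the payload has been decoded with encoding/json into map[string]any; the function name resolvePath is mine, chosen for the example.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// resolvePath walks a decoded JSON object along a dot-separated path.
// Any step that hits a missing key or a non-map value resolves to nil.
func resolvePath(data map[string]any, path string) any {
	var current any = data
	for _, key := range strings.Split(path, ".") {
		m, ok := current.(map[string]any)
		if !ok {
			return nil // nil or a scalar in the middle of the path
		}
		current = m[key] // a missing key yields nil
	}
	return current
}

func main() {
	raw := `{"account": {"holder": {"name": "Jane Smith"}}, "amount": 5000}`
	var payload map[string]any
	if err := json.Unmarshal([]byte(raw), &payload); err != nil {
		panic(err)
	}
	fmt.Println(resolvePath(payload, "account.holder.name")) // the nested string
	fmt.Println(resolvePath(payload, "amount.currency"))      // nil: amount is not a map
	fmt.Println(resolvePath(payload, "account.missing.name")) // nil: key does not exist
}
```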

Formatters

Four built-in formatters handle common display cases: currency (adds $, commas, two decimals), date (parses RFC 3339, formats as "Jan 2, 2006"), number (comma-separated thousands), truncate (50 chars with ellipsis).
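Here is a minimal sketch of the four formatters using only the standard library. Function names, the fallback for unparseable dates, and the choice of a three-dot ASCII ellipsis are assumptions for illustration; the behaviors themselves follow the descriptions above.

```go
package main

import (
	"fmt"
	"math"
	"strconv"
	"strings"
	"time"
)

// groupThousands inserts commas into a string of ASCII digits.
func groupThousands(digits string) string {
	var b strings.Builder
	for i, d := range digits {
		if i > 0 && (len(digits)-i)%3 == 0 {
			b.WriteByte(',')
		}
		b.WriteRune(d)
	}
	return b.String()
}

// formatCurrency renders a dollar sign, grouped thousands, and two decimals.
func formatCurrency(v float64) string {
	whole, frac, _ := strings.Cut(strconv.FormatFloat(math.Abs(v), 'f', 2, 64), ".")
	out := "$" + groupThousands(whole) + "." + frac
	if v < 0 {
		return "-" + out
	}
	return out
}

// formatNumber renders grouped thousands and keeps any fractional part as-is.
func formatNumber(v float64) string {
	whole, frac, hasFrac := strings.Cut(strconv.FormatFloat(math.Abs(v), 'f', -1, 64), ".")
	out := groupThousands(whole)
	if hasFrac {
		out += "." + frac
	}
	if v < 0 {
		return "-" + out
	}
	return out
}

// formatDate parses RFC 3339 and renders "Jan 2, 2006" style.
// Assumption: unparseable input is returned unchanged.
func formatDate(s string) string {
	t, err := time.Parse(time.RFC3339, s)
	if err != nil {
		return s
	}
	return t.Format("Jan 2, 2006")
}

// truncate keeps at most 50 characters (runes) and appends an ellipsis.
func truncate(s string) string {
	r := []rune(s)
	if len(r) <= 50 {
		return s
	}
	return string(r[:50]) + "..."
}

func main() {
	fmt.Println(formatCurrency(5000))
	fmt.Println(formatNumber(1234567))
	fmt.Println(formatDate("2024-03-15T10:30:00Z"))
	fmt.Println(truncate(strings.Repeat("x", 60)))
}
```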

Title Placeholders

The template title supports {{path}} and {{path|format}} placeholders. A regex matches these patterns, resolves the path, applies the formatter, and substitutes inline. Title: "Transfer {{amount|currency}} to {{recipient.name}}" becomes "Transfer $5,000.00 to Jane Smith".
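The substitution step can be sketched like this. The regex, the renderTitle name, and the choice to render unresolved paths as an empty string are assumptions; the currency formatter here is a deliberately simple stand-in without thousands grouping, so the example uses an amount that needs none.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// placeholder matches {{path}} and {{path|format}}.
var placeholder = regexp.MustCompile(`\{\{([\w.]+)(?:\|(\w+))?\}\}`)

// resolvePath walks a decoded JSON object along a dot-separated path.
func resolvePath(data map[string]any, path string) any {
	var current any = data
	for _, key := range strings.Split(path, ".") {
		m, ok := current.(map[string]any)
		if !ok {
			return nil
		}
		current = m[key]
	}
	return current
}

// demoFormatters is a stand-in registry with a simplified currency formatter.
var demoFormatters = map[string]func(any) string{
	"currency": func(v any) string { return fmt.Sprintf("$%.2f", v) },
}

// renderTitle replaces each placeholder with its resolved, formatted value.
func renderTitle(title string, data map[string]any, formatters map[string]func(any) string) string {
	return placeholder.ReplaceAllStringFunc(title, func(match string) string {
		parts := placeholder.FindStringSubmatch(match)
		value := resolvePath(data, parts[1])
		if value == nil {
			return "" // assumption: unresolved paths render as empty
		}
		if format, ok := formatters[parts[2]]; ok {
			return format(value)
		}
		return fmt.Sprint(value) // no formatter given (or name not registered)
	})
}

func main() {
	data := map[string]any{
		"amount":    500.0,
		"recipient": map[string]any{"name": "Jane Smith"},
	}
	fmt.Println(renderTitle("Transfer {{amount|currency}} to {{recipient.name}}", data, demoFormatters))
}
```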

Batch Items

Some requests represent batch operations. The items section defines a path to an array in the payload, a label path within each element, and fields to extract per item. Templates are validated at policy creation time: invalid JSON, missing labels, unknown formatters are all caught before the policy is stored.

Embeddable Widgets

Quorum ships embeddable web components built with Svelte 5. The main widget, <quorum-approval-panel>, shows request details, stage progress, approve/reject buttons, and an audit trail. Consumers include a single script tag and drop the custom element into their HTML.

Shadow DOM for Style Isolation

Every widget uses shadow: "open" to encapsulate styles. This prevents the host page's CSS from breaking the widget layout and prevents widget CSS from leaking into the host page. All styles must be self-contained, but the guarantee that the widget renders correctly in any host application is worth the slightly larger bundle.

Global Configuration with Getter Functions

Static configuration (hardcoding an API token in an HTML attribute) goes stale when tokens rotate. The QuorumWidgets.configure() function accepts getter functions for dynamic values:

    QuorumWidgets.configure({
        apiUrl: 'https://quorum.example.com',
        token: () => getAccessToken(),       // evaluated on every API call
        tenantId: () => getCurrentTenant(),  // evaluated lazily
    });

The MaybeGetter<T> = T | (() => T) type allows both static values and functions. Getters are resolved lazily on every API call via an internal resolve() helper, so token refreshes and tenant switches take effect immediately without re-mounting widgets.

A subtlety: Svelte custom elements mount and run their initialization effects the moment the script loads. If configure() is called after the element is already in the DOM, the widget's initial load() call has no API URL and silently skips. The fix: configure() dispatches a quorum:configured CustomEvent on document. Each widget listens for this event and re-evaluates its config, loading data if the global config is now available.

SSE Integration in Widgets

Widgets subscribe to SSE events for the request they're displaying. When an updated event arrives, the widget re-fetches the full request state via REST and re-renders. The widget monitors SSE connection health with a 45-second timeout: if the server's 30-second keepalive stops arriving, the widget reconnects automatically.

One bug worth documenting: reading the request object inside a Svelte $effect that sets up the SSE connection caused the effect to re-run every time load() created a new object, tearing down and rebuilding the connection in a loop. The fix was tracking a primitive isPending boolean that only changes on actual status transitions, not on every re-render.

Build-Time Modularity

The server binary can be compiled with or without the admin console and embeddable widgets. This is handled via Go build tags and the embed.go / embed_stub.go pattern:

    // console/console.go
    //go:build console

    //go:embed frontend/dist/*
    var assets embed.FS

    func Handler() http.Handler { /* ... */ }

    // console/console_stub.go
    //go:build !console

    func Handler() http.Handler { return nil }

The server checks for nil before mounting routes. Three compilation modes: go build (API only), go build -tags console (API + admin console), go build -tags "console embed" (full). The API-only binary has no frontend dependencies and a smaller footprint.

The Middleware Stack

The HTTP middleware chain is ordered carefully:

    Recoverer          <- Panic recovery (chi built-in)
    RequestID          <- Unique ID per request (chi built-in)
    CORS               <- Cross-origin headers for widget embedding
    Logging            <- Structured logging with status, duration, user, tenant
    Metrics            <- Prometheus counters and histograms (if enabled)
    Auth               <- Identity extraction (API routes only)
    Tenant Validation  <- In-memory cache check (API routes only)
    Console JWT Auth   <- JWT validation (console routes only)
    Console Tenant     <- Optional ?tenant_id= filter (console data routes only)
    Handler            <- Business logic

There are two distinct error response functions: writeError for 4xx responses where the message is intentional ("name is required", "unknown tenant"), and writeServerError for 5xx responses where the real error must be logged but never leaked to clients. writeServerError captures method, path, query, user ID, and tenant ID in the log, enough context to diagnose issues without exposing internals to the caller.
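A minimal sketch of the split, assuming a JSON body of the form {"error": "..."} (the response shape and the writeJSON helper are my assumptions). The real writeServerError also logs user and tenant IDs from the request context; that part is omitted here because it depends on the auth package.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"log/slog"
	"net/http"
	"net/http/httptest"
)

// writeJSON is a hypothetical shared helper for both error paths.
func writeJSON(w http.ResponseWriter, status int, msg string) {
	w.Header().Set("Content-Type", "application/json")
	w.WriteHeader(status)
	_ = json.NewEncoder(w).Encode(map[string]string{"error": msg})
}

// writeError is for 4xx responses: the message is written for the caller.
func writeError(w http.ResponseWriter, status int, msg string) {
	writeJSON(w, status, msg)
}

// writeServerError is for 5xx responses: the real error goes to the log
// with request context, and the caller only ever sees a generic message.
func writeServerError(w http.ResponseWriter, r *http.Request, err error) {
	slog.ErrorContext(r.Context(), "internal server error",
		"error", err,
		"method", r.Method,
		"path", r.URL.Path,
		"query", r.URL.RawQuery,
	)
	writeJSON(w, http.StatusInternalServerError, "internal server error")
}

// demoServerError exercises writeServerError against an in-memory recorder.
func demoServerError() (int, string) {
	req := httptest.NewRequest(http.MethodGet, "/requests?status=pending", nil)
	rec := httptest.NewRecorder()
	writeServerError(rec, req, errors.New("cannot scan interval into *string"))
	return rec.Code, rec.Body.String()
}

func main() {
	rec := httptest.NewRecorder()
	writeError(rec, http.StatusBadRequest, "name is required")
	fmt.Printf("%d %s", rec.Code, rec.Body.String())

	code, body := demoServerError()
	fmt.Printf("%d %s", code, body)
}
```

The scan error appears only in the server log; the response body stays generic.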

This separation exists because of a real bug: the initial logging middleware showed status: 500 with no cause. The actual error was a Postgres type mismatch: an INTERVAL column was being scanned into *string instead of *time.Duration. That bug was invisible until I added writeServerError with full request context. The fix was two lines, but finding it without the error log took significantly longer.

Prometheus Metrics

Quorum exposes Prometheus metrics across four areas.

HTTP. quorum_http_requests_total and quorum_http_request_duration_seconds, labeled by method, path, and status code.

Business. quorum_requests_total (counter by outcome), quorum_requests_pending (gauge), quorum_requests_resolution_duration_seconds (histogram). The resolution duration uses long buckets (up to 24h) because approval requests can be pending for days.

Webhooks. quorum_webhook_deliveries_total (success/failure counter) and quorum_webhook_delivery_duration_seconds.

Authorization hooks. quorum_auth_hook_total and quorum_auth_hook_duration_seconds (outcome and latency). A spike in error outcomes points at a downstream service issue rather than a policy misconfiguration.

The pending gauge enables alerting on queue depth. The resolution histogram enables SLA tracking.

The Expiry Worker

Pending requests can sit indefinitely without auto-expiration. Policies set auto_expire_duration (e.g., "24h"), and the request service computes expires_at at creation time.

A background goroutine ticks at a configurable interval and queries for pending requests where expires_at <= NOW(). For each expired request, it transitions the status to expired, enqueues webhook events within a transaction, and records an audit log with actor_id: "system".

The worker handles the race condition where a request is approved between the expiry query and the status update: the CAS guard (WHERE status = 'pending') catches it, and the worker skips the entry gracefully.
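To make the guard concrete, here is a sketch that models it in memory. In the real store the check is a guarded UPDATE plus a rows-affected test; the SQL text, table and column names, and the memStore type below are all hypothetical stand-ins so the example runs without a database.

```go
package main

import (
	"fmt"
	"sync"
)

// expireSQL shows the assumed shape of the guarded update. Zero rows
// affected means another actor resolved the request first.
const expireSQL = `UPDATE requests SET status = 'expired' WHERE id = $1 AND status = 'pending'`

// memStore is an in-memory stand-in for the request table.
type memStore struct {
	mu     sync.Mutex
	status map[string]string
}

// compareAndSetStatus changes the status only if it still equals from,
// mirroring "rows affected == 1" for the guarded UPDATE.
func (s *memStore) compareAndSetStatus(id, from, to string) bool {
	s.mu.Lock()
	defer s.mu.Unlock()
	if s.status[id] != from {
		return false
	}
	s.status[id] = to
	return true
}

// expireBatch tries to expire each candidate and skips any that lost the race.
func expireBatch(s *memStore, candidates []string) (expired, skipped []string) {
	for _, id := range candidates {
		if s.compareAndSetStatus(id, "pending", "expired") {
			expired = append(expired, id) // real worker: enqueue webhooks, write audit
		} else {
			skipped = append(skipped, id)
		}
	}
	return expired, skipped
}

func main() {
	s := &memStore{status: map[string]string{"r1": "pending", "r2": "pending"}}
	candidates := []string{"r1", "r2"} // what the expiry query returned

	// A checker approves r2 after the query but before the worker's update.
	s.compareAndSetStatus("r2", "pending", "approved")

	expired, skipped := expireBatch(s, candidates)
	fmt.Println("expired:", expired, "skipped:", skipped)
}
```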

The webhook integration required tenant context injection. The worker processes requests from all tenants, but webhook matching is tenant-scoped. For each expired request, the worker creates a tenant-specific context (auth.WithTenantID(ctx, req.TenantID)) before enqueuing webhooks, so ListByEventAndType finds the correct tenant's subscriptions.

Migration Strategy

Schema changes are versioned SQL files with up/down pairs. Each migration is numbered sequentially: 001_create_policies.up.sql, 002_create_requests.up.sql, etc. Both Postgres and MSSQL have parallel migration sets with dialect-specific SQL.

Key design decisions in the schema:

Partial indexes. The fingerprint index is WHERE status = 'pending', so only active requests occupy index space. Resolved requests are ignored. The outbox claimable index is WHERE status IN ('pending', 'processing').

Composite indexes for tenant queries. Multi-tenant queries filter by tenant_id plus another column (status, type). With only a single-column index on tenant_id, the database still has to visit every row for that tenant and filter on the second column. Composite indexes like (tenant_id, status) and (tenant_id, request_type) keep tenant-scoped queries fast as data grows.

JSONB for flexibility. Policy stages, identity fields, and display templates are stored as JSONB. This avoids the n+1 query problem of separate stage tables and allows the schema to evolve without migrations for structural changes.

Unique constraint evolution. The approval table enforces UNIQUE(request_id, checker_id, stage_index): a checker can vote once per stage, but can vote at different stages. Policy uniqueness is UNIQUE(tenant_id, request_type), scoped per tenant.

Postgres INTERVAL for durations. auto_expire_duration is stored as a native INTERVAL type rather than a string. The pgx driver maps this directly to Go's time.Duration. I learned this the hard way: the initial implementation used *string, which broke at runtime with a scan error that was only visible after I added proper error logging.

MSSQL UNIQUEIDENTIFIER endianness. SQL Server swaps the first three groups (4-2-2 bytes) of a UUID relative to Go's RFC 4122 big-endian uuid.UUID. The go-mssqldb driver supports guid conversion=true in the DSN to handle this at the driver level. I inject this parameter automatically via ensureGUIDConversionDSN() so nobody has to remember the byte-ordering quirk.
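Quorum leaves the conversion to the driver, but the byte shuffle itself is easy to show. This sketch is illustrative only; swapGUIDByteOrder is a name I made up, and it works on a plain [16]byte so it has no third-party dependencies.

```go
package main

import (
	"encoding/hex"
	"fmt"
)

// swapGUIDByteOrder converts between RFC 4122 byte order and SQL Server's
// mixed-endian layout by reversing the first three groups (4, 2, and 2
// bytes). The last 8 bytes are untouched. The function is its own inverse.
func swapGUIDByteOrder(in [16]byte) [16]byte {
	out := in
	out[0], out[1], out[2], out[3] = in[3], in[2], in[1], in[0]
	out[4], out[5] = in[5], in[4]
	out[6], out[7] = in[7], in[6]
	return out
}

func main() {
	raw, err := hex.DecodeString("00112233445566778899aabbccddeeff")
	if err != nil {
		panic(err)
	}
	var id [16]byte
	copy(id[:], raw)

	swapped := swapGUIDByteOrder(id)
	fmt.Println(hex.EncodeToString(swapped[:])) // 33221100554477668899aabbccddeeff

	roundTrip := swapGUIDByteOrder(swapped)
	fmt.Println(roundTrip == id) // true
}
```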

MSSQL NULL uniqueness. Unlike Postgres (where NULLs are distinct in unique constraints), SQL Server's UNIQUE only allows one NULL row. For nullable columns that need uniqueness when non-null but allow multiple NULLs (like idempotency_key and fingerprint), I use filtered unique indexes: CREATE UNIQUE INDEX ... WHERE column IS NOT NULL.

Testing Strategy

Quorum's test suite has three layers, each catching different classes of bugs.

Unit Tests with Mock Stores

Every store interface has a corresponding mock in internal/testutil/ with configurable function fields. Tests set up only the methods they exercise:

    requestStore := &testutil.MockRequestStore{
        GetByIDFunc: func(ctx context.Context, id uuid.UUID) (*model.Request, error) {
            return req, nil
        },
    }

Unconfigured methods panic with a descriptive message. This makes it immediately obvious when a test exercises an unexpected code path.

Test fixtures use a builder pattern with variadic overrides: testutil.NewRequest(func(r *model.Request) { r.Status = model.StatusApproved }). The base fixture provides sensible defaults; overrides modify only what the test cares about.
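The fixture helper boils down to a few lines. This is a sketch with a simplified Request type and made-up default values; the actual fields and defaults in testutil will differ.

```go
package main

import "fmt"

// Status and Request are simplified stand-ins for the model package types.
type Status string

const (
	StatusPending  Status = "pending"
	StatusApproved Status = "approved"
)

type Request struct {
	ID       string
	TenantID string
	Type     string
	Status   Status
}

// NewRequest returns a fixture with defaults (hypothetical values here),
// then applies each override in order so tests change only what they need.
func NewRequest(overrides ...func(*Request)) *Request {
	r := &Request{
		ID:       "req-1",
		TenantID: "tenant-1",
		Type:     "fund_transfer",
		Status:   StatusPending,
	}
	for _, override := range overrides {
		override(r)
	}
	return r
}

func main() {
	base := NewRequest()
	approved := NewRequest(func(r *Request) { r.Status = StatusApproved })
	fmt.Println(base.Status, approved.Status, approved.Type)
}
```

Because each call builds a fresh struct, one test's overrides can't leak into another's fixture.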

Shared Integration Tests

The internal/store/storetest/ package exports database-agnostic test functions: TestRequestStore, TestApprovalStore, TestPolicyStore, etc. Each function accepts a store interface and exercises every method: CRUD operations, filtering, edge cases, tenant isolation.

Both Postgres and MSSQL integration tests call into the same shared suite with real store instances. This guarantees behavioral parity between database backends without duplicating test logic.

Testcontainers for Real Databases

Integration tests spin up ephemeral database containers via testcontainers-go. A setupPostgres function starts a postgres:16-alpine container, runs all migration scripts in order, connects via pgx, and registers cleanup to terminate the container when the test finishes. The entire lifecycle is managed by Go's testing framework.

Tests are tagged with //go:build integration and excluded from the default make test target. A separate make test-integration runs them, and make test-all runs both.

What's Next

Quorum handles the core approval workflow today. The SSE hub is in-process only and needs a shared event bus for multi-instance deployments. Auth modes beyond "trust" (JWT verification, custom endpoint) are documented in the config but not yet implemented. Console operators all have the same access level; role-based scoping is planned. No rate limiting exists on any endpoint.

The roadmap includes policy versioning, approval delegation, request comments, batch approve/reject, email/Slack notifications, conditional stage logic (skip stages based on payload values), cross-policy fingerprinting, and client SDKs for Go, C#, Node.js, and Python.

The goal is the same as when I started: no team should have to build maker-checker from scratch. Define your policies, point your app at Quorum, and focus on your actual product.

Quorum is open source and available on GitHub.